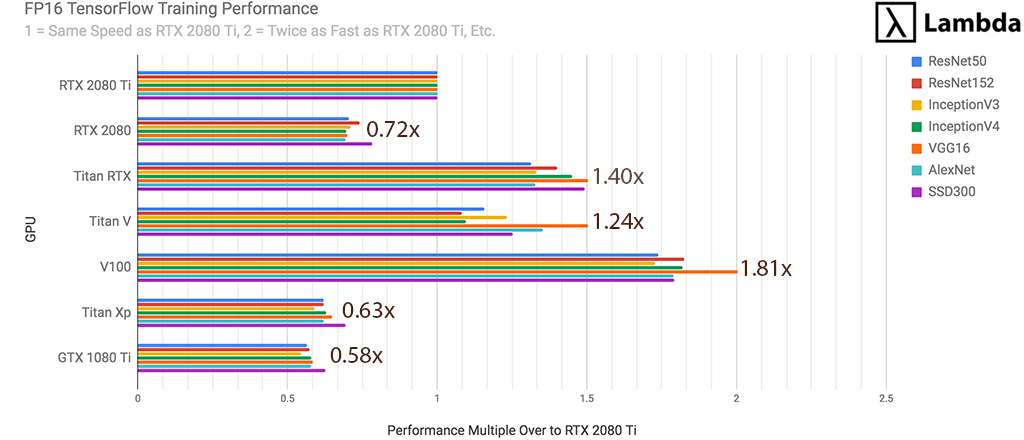

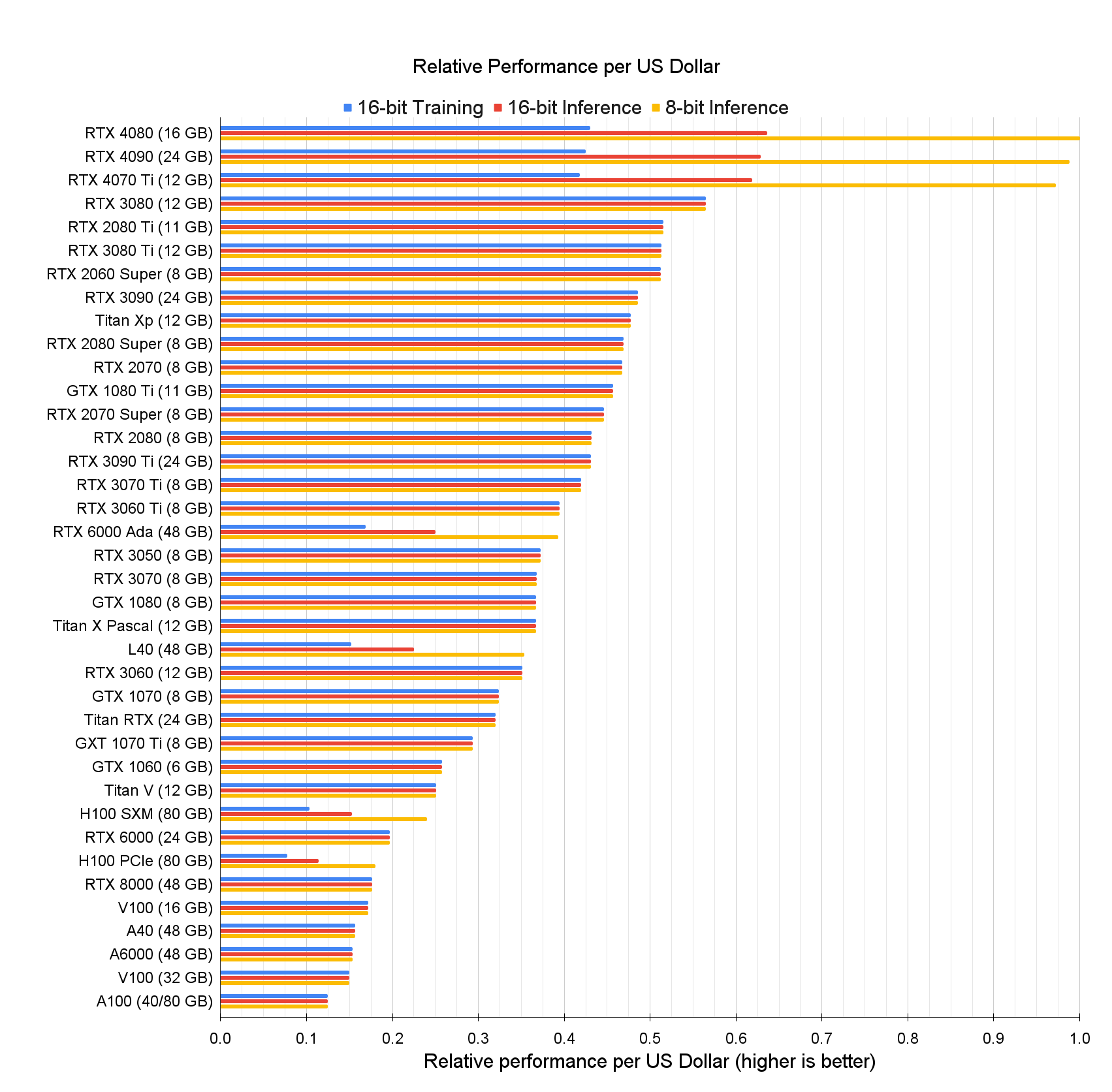

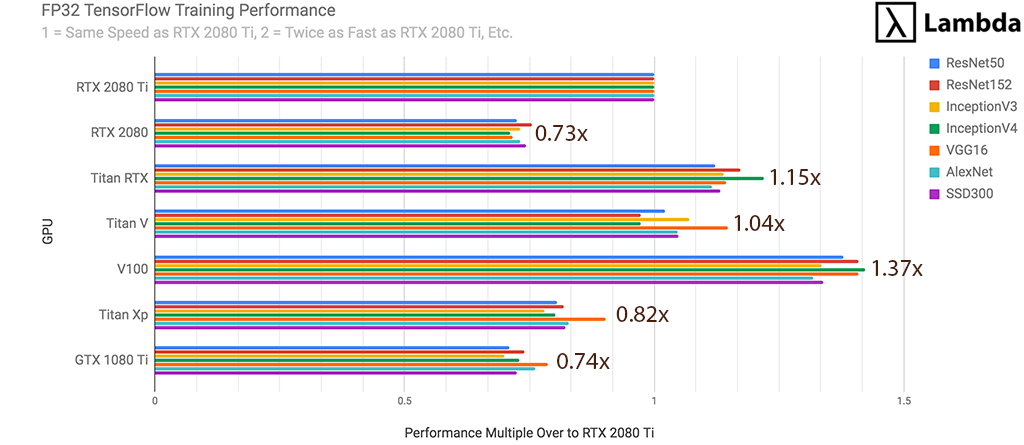

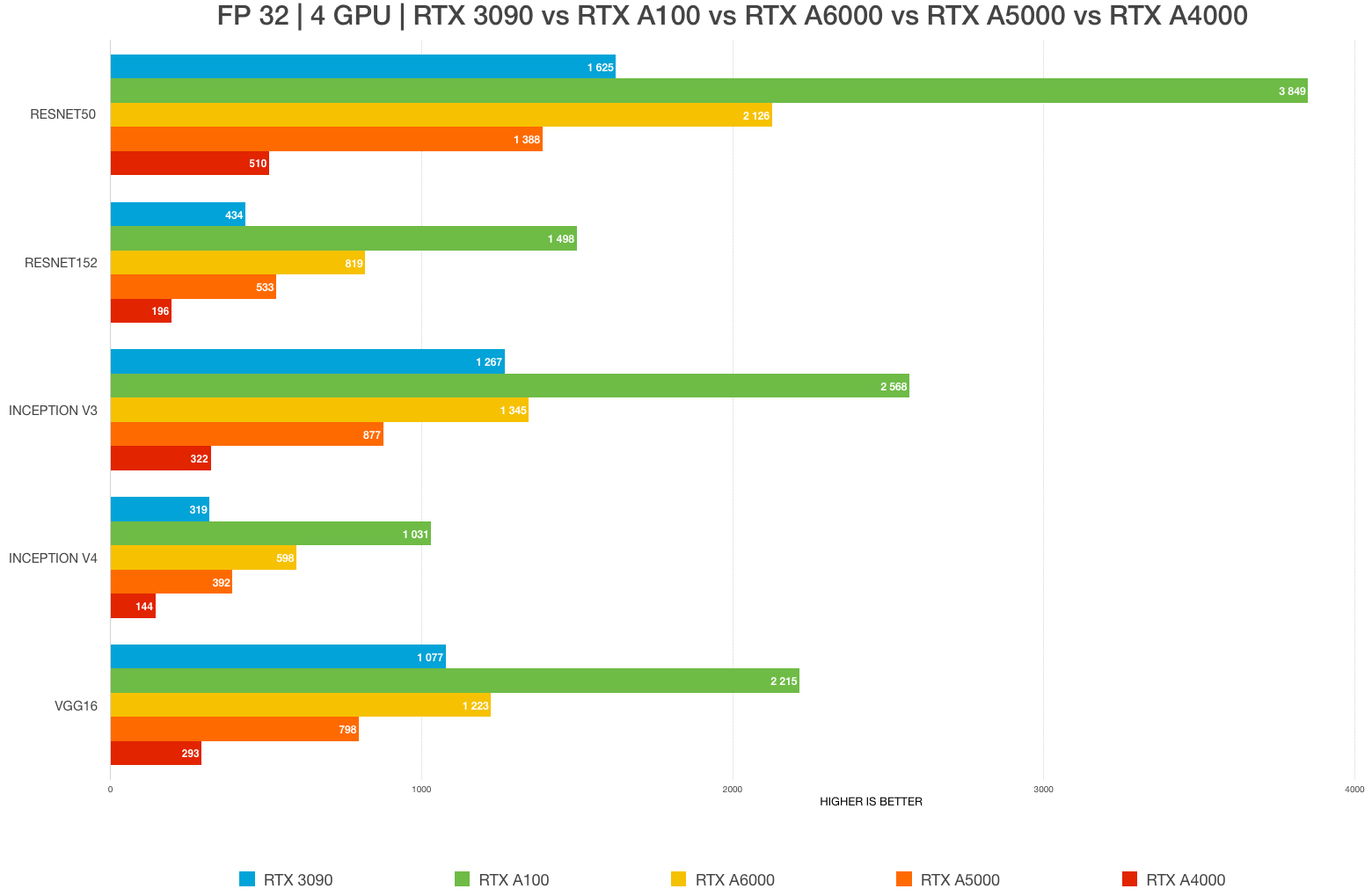

Best GPU for AI/ML, deep learning, data science in 2023: RTX 4090 vs. 3090 vs. RTX 3080 Ti vs A6000 vs A5000 vs A100 benchmarks (FP32, FP16) – Updated – | BIZON

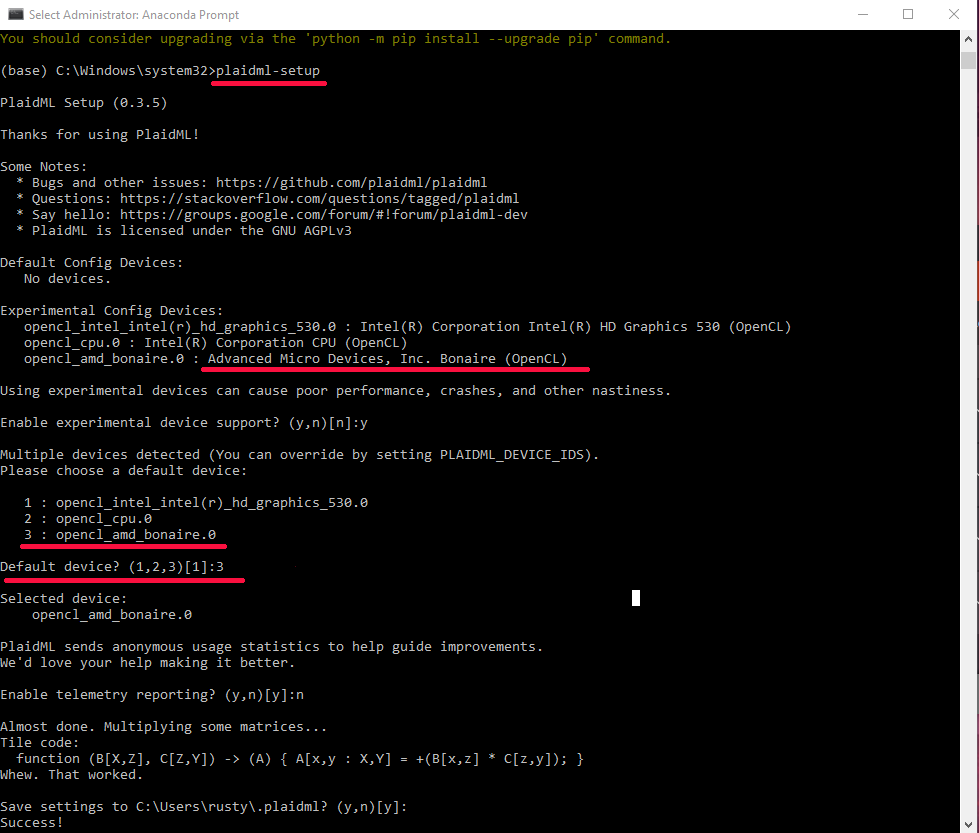

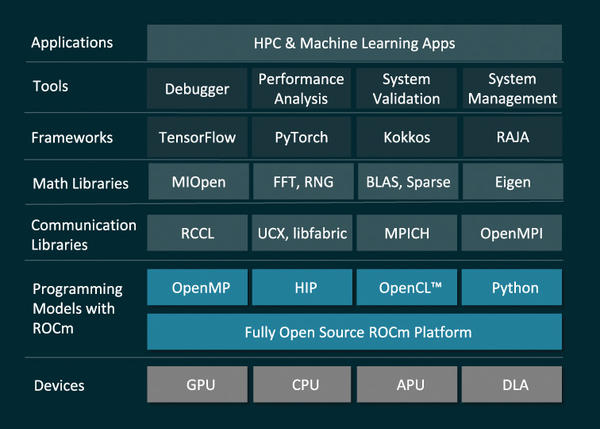

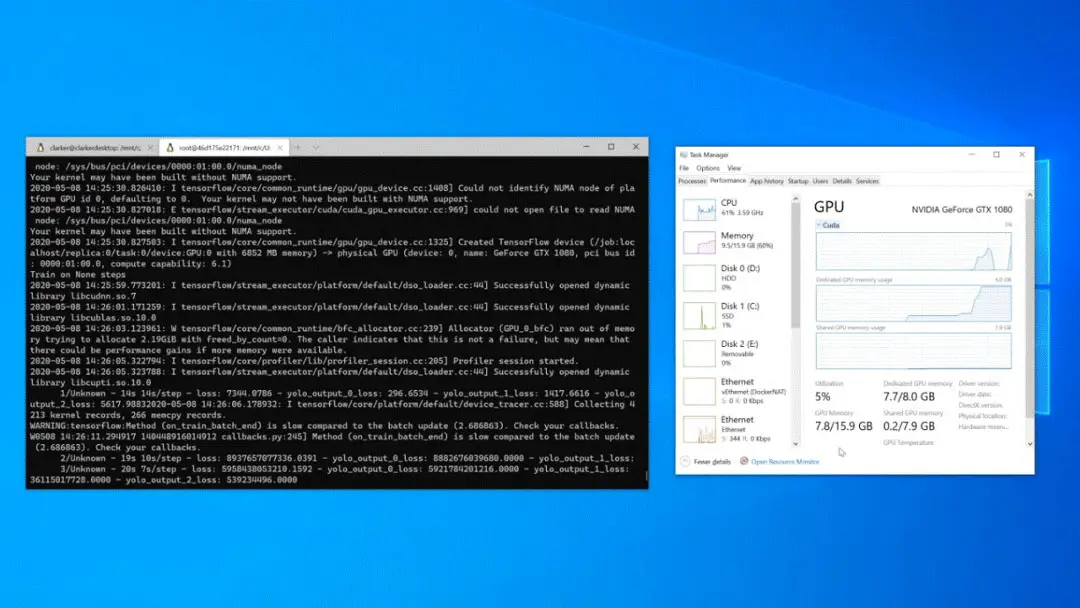

Is it necessary to have NVIDIA graphics to get started with TensorFlow? What can AMD users do? - Quora

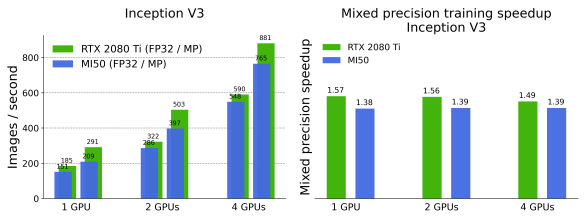

Performance comparison of image classification models on AMD/NVIDIA with PyTorch 1.8 | SURF Communities

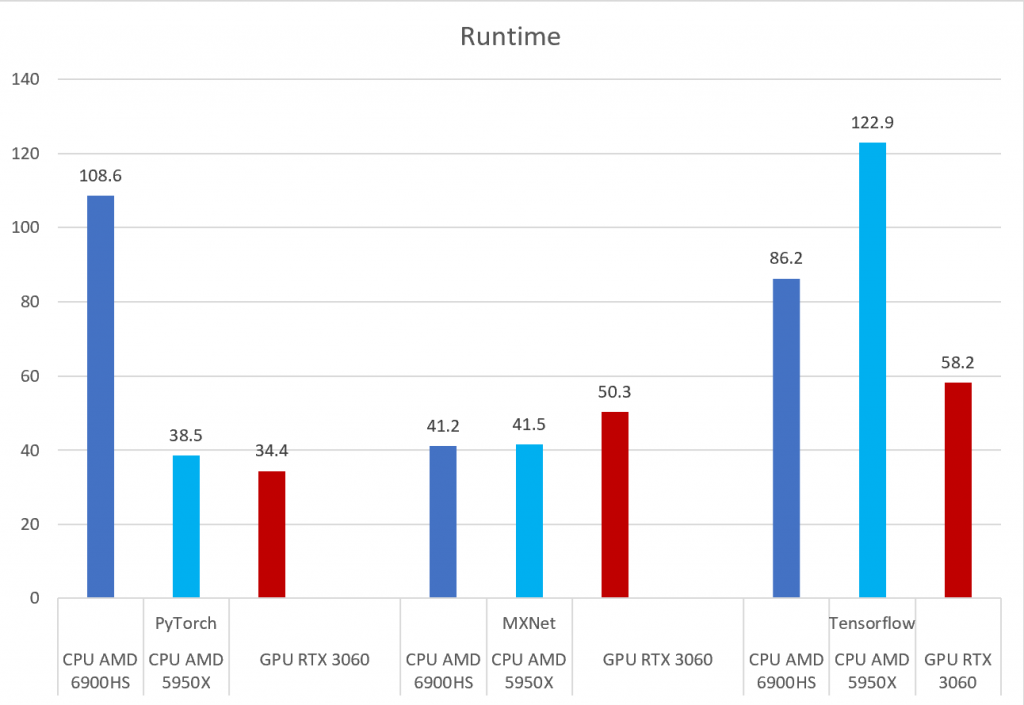

PyTorch, Tensorflow, and MXNet on GPU in the same environment and GPU vs CPU performance – Syllepsis